News Wire Magazine shares stories of incredible entrepreneurs and leaders who are crushing it in life and business!

- SEO Friendly

- Instant Syndication

- No Bulk Emails

Standard

- Standard Distribution

- Rapid Approval

- Ad-Free

- Include Hyperlinks

- Unlimited Article Length

- Targeted Publication

- Social Share

Upgraded

$49

- Upgraded Distribution

- Rapid Approval

- Ad-Free

- Include Hyperlinks

- Unlimited Article Length

- Targeted Publication

- Social Share

- Google News

- Bing News

Premium

- Premium Distribution

- Rapid Approval

- Ad-Free

- Include Hyperlinks

- Unlimited Article Length

- Targeted Publication

- Social Share

- Google News

- Bing News

- Facebook Instant Articles

- Expert Article SEO

- Additional Manual Distribution

Daniel Gomez – Motivational Speaker, Business Coach & Corporate Trainer

Who is Daniel Gomez? Daniel Gomez has over a decade of experience in the corporate world as a speaker, business coach, corporate trainer, and executive…

Marketing Yourself As A Motivational Speaker – Get Paid To Speak

In 2018, I was just starting my career as a motivational speaker. I owned some retail stores and had given a few unpaid speeches at…

Ken Joslin is a Master of Building Confidence, Establishing Community, and Gaining Clarity!

Ken Joslin has a diverse background as both a former pastor and coach. In addition, he’s an incredibly successful real estate professional. Ken Joslin is also…

This Isn't Your Old Newswire

In the past, press release distribution services relied on sending news releases to major media outlets, radio stations, TV networks, and newspapers via email. Many companies still use this distribution method.

Press Release Distribution

Use News-Wire.com to distribute your press release. Free press releases are ad-supported, while the Standard, Upgraded, & Premium press release packages are ad-free!

Cutting-Edge Technology

More sophisticated newswire distribution companies also use RSS syndication to distribute press releases to thousands of relevant websites, blogs, and even RSS feed readers on smartphones, tablets, and computers.

Press Release Distribution

Hundreds of thousands of reporters, newspapers, television news stations, and radio newscasts receive their news stories from press release distribution services like News-Wire.com.

Why Choose News Wire?

An American tech investor recently acquired News Wire. Once the acquisition was complete, this investor and a small team moved the site to a faster hosting platform running on SSDs instead of the older, slower HDDs many hosting companies still use.

The News-Wire.com site was rebuilt from the ground up, focusing on speed, search engine ranking, and ease of use. In addition, the developers began adding numerous upgrades, including social media syndication and instant syndication to Google News & Bing News. The addition of RSS syndication gives entrepreneurs access to a more cost-effective way of distributing content to major media outlets!

Stop Using Outdated Press Release Distribution Services

PR marketing used to mean competing to get your press release seen among the hundreds of other news releases crowding media contacts’ email inboxes each day.

Getting a story published by credible journalists was tricky; even getting them to read an email from one of the press release distribution services that filled their inboxes was a long shot. News Wire takes care of that for you with its established network of top-tier reporters and through instant syndication to relevant blogs, websites, and podcast hosts. In addition, many radio hosts now use Android tablets or iPads with RSS feed readers that display these feeds, so they have relevant, timely talking points during their shows.

Discover the best Press Release Distribution Service in 2021

A quick Google search confirms that many press release distribution companies are devoted to distributing newswires to a busy market of websites, platforms, and social networks. Lots of newswire distribution services promise to get your news noticed. So what are the best press release services?

News Wire isn’t the only press release distribution company to choose from, but we frequently hear we’re one of the best news services our customers have ever used.

News Wire Press Release Distribution Service

Submit a free press release with News-Wire.com or upgrade to a better package that fits your needs! Use free press release templates, or use our press release submission form to write, format, and edit your press release. Our online services make it easy to create newsworthy content without paying for press release writing services.

Do Press Releases Help SEO?

Here’s the easiest way to look at it.

Do press releases help generate backlinks?

The short answer to this question is: Yes! A large part of optimizing your website for search engine ranking involves gaining relevant backlinks. Your site is competing with others in your category, and each backlink counts as a virtual vote for your site. All things being equal, the more relevant, quality sites that link to yours, the higher it will rank. The press release is considered one of the best methods for creating those precious links!

With a press release, you can build these links much faster. You also earn higher-quality links through a distribution service that understands your needs. Journalists and broadcast agencies choose relevant press releases for their audiences, which helps get your information in front of your target demographic. However, if your press release is too short or full of low-quality writing, reporters will not be interested in using your information for their news coverage.

Use News-Wire.com to distribute your press release effectively and affordably! Our free press releases are ad-supported, while the standard, upgraded, and premium press release packages are ad-free! With News-Wire, you have tons of options to make the best choice for your press release!

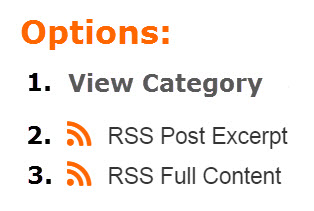

We include each press release published by News-Wire.com in the relevant RSS Post Excerpt feeds & RSS Full Content feeds used by online news sites, blogs, and websites to syndicate content to their sites automatically!

Frequently asked questions

News releases are an essential and relevant tool for any business. News-Wire.com is a press release distribution service that can help you find the perfect category for your story, format your document online, add relevant images, and distribute it to journalists who will be interested in your story! News Wire is the best price point for any startup, small business, or entrepreneur! Publicize your business. News Wire can make you a winner.

Many reporters use newswire syndication services as a primary source for their news stories. Because of this, a press release you submit on News Wire is likely to reach highly talented journalists directly! With News-Wire.com, you can publish and distribute press releases without paying expensive fees! News Wire is FREE to use: we provide a free, ad-supported press release option with all the value and benefits of a free press release distribution service. We also offer paid packages and various upgrade features to take your campaign to the next level!

Press releases work because they help drive traffic and recognition to businesses, events, or causes that might not otherwise receive them on their own. News releases can be used as an SEO strategy by including keywords within the text, which increases visibility on search engines such as Google, Bing & Yahoo! The more people who see your press release, the greater the chance someone will contact you about your story!

You submit news releases to inform the public about a company’s activities and achievements. News releases can help create awareness of your brand and products in the marketplace, which is essential for SEO. News releases also provide journalists with information that they may use when writing articles about your business or industry. Journalists often include links to news releases when citing sources in their writings. News release distribution services can provide you with a platform to distribute these news stories to media outlets worldwide, generating more exposure for you and your business.

News releases, or press releases, are a great way to get the word out about your product, service, organization, event, or anything else happening in your business sector. In addition, news releases help you gain credibility and recognition with the media and reporters who receive them, and they help increase web traffic via links back to your website from news outlets. There is also no cost for sending out a release, so there’s no reason not to do it!

News Wire Press Release Distribution Packages

News-Wire.com helps small businesses and entrepreneurs handle their newswire distribution needs efficiently. With News Wire, you have the option to schedule your press releases and have them distributed to thousands of online media outlets.

Unlike many other press release delivery options, News-Wire offers a diverse range of packages to aid you. The following are the packages offered by News-Wire.com:

A press release is a statement or announcement issued to the news media and other interested parties. Organizations issue press releases for a variety of reasons – to promote an event, product, service, or initiative; to gain attention for a cause or issue; or to simply provide information to the media. A well-written press release is vital to getting your news noticed by reporters and editors at news outlets. News Wire offers multiple press release distribution packages!

The premium package is the highest-quality package offered by News-Wire.com to provide elite services for a large business enterprise. It is also an ad-free package and provides the most extensive reach of all the distribution services we offer. You can check out the detailed features and pricing of the Premium Package and decide if this package is the best suited for your needs.

The Upgraded Package from News Wire is also free of ads and provides the same services as the other two packages and more. You can check out our pricing and features for the Upgraded Package to learn more about it. The Upgraded Package is ideal for larger businesses but might not be the best option for startups. If you have a small business, the Basic or Standard package would probably be a better fit.

Submitting a press release online has never been so easy! News-Wire.com provides the Standard Package for businesses that are looking for a budget-friendly, ad-free option. This package has low rates, but you get faster and more extensive distribution networks than other popular press release distributors. So free yourself from emailing all those media sites and journalists who never respond. With our Standard Package, you leave the responsibility of distributing your press release to us!

Do you know how to submit a press release for free? If you have ever tried submitting a press release online, you are aware of the challenges involved. Finding a trustworthy press release distribution service can be a significant hurdle. This post aims to introduce you to one of the most effective yet affordable press release distribution services out there, known as News-Wire.

With News-Wire.com, you can distribute and publish your PR articles, information, and reports directly to online media outlets. By distributing your press release, you get a chance to increase your brand awareness and boost the value of your business! You can now get both free and paid high-quality press release distribution services from News-Wire.

Why are Press Release Distribution Services good for your business?

You need a newswire service for your business for plenty of reasons, including spreading the word about your agency or business, media announcements, increasing visibility of your activity on online platforms, and building trust with your potential customers. In addition, we recommend you use a trustworthy press release service for announcing significant company changes, launching new products, new hires, change of name and location, etc.

If you are not sure how to submit a press release for free, then we have got your back. This post will talk about all the services and packages that News Wire offers to help you get started.

Press Release Distribution Customization

As you can see, News Wire cares about users from all walks of life. Whether you are a small firm or a big business, News-Wire.com has the right press release distribution solution for you! In addition, our extensive network offers comprehensive coverage to all the critical news outlets, including Yahoo News and Google News.

At News Wire, you can find the best newswire distribution service for your needs and customize your favorite package. We assure you that once you use our services, you will keep on returning for more!

Why should I submit a press release?

The purpose of publishing a press release is to generate press coverage. 71% of journalists see news releases as their favorite type of content received from brands. Gaining press coverage is an essential part of getting your business or brand in front of the public. It helps build brand awareness and gives journalists information they think their readers will love. In short, press coverage is an excellent way to market your company and put your name and brand in the spotlight.

News Wire Meaning

A wire service, or newswire, is used by journalists and editors to get the latest news fast. Wire services broadcast their content via satellite, fax

Are Press Releases Still Relevant? – Check This Out!

In the past, press releases used to be very expensive and time-consuming to produce. In today’s social media-driven world, press releases are still relevant; however,

5 Steps: How To Write A Great Press Release That Gets Media Coverage

If you’re looking to get media coverage, a press release is an essential marketing tool. But how do you write one that actually gets picked

Today's Top News

Bart Nollenberger – Empowering Sales Professionals & Building Stronger Leaders

Bart Nollenberger is a driven and passionate motivational speaker, sales trainer, author, and executive coach. He’s a John Maxwell Executive Director and a master of

Web3 Gaming Accelerator ICC Camp Launches Incubation with a Star-Studded Lineup of Mentors

Hong Kong, January 9, 2024 – Web3 games represent a new generation of games built on blockchain technology and decentralized principles. Paving the way for Web3 into mainstream markets, Web3 games not only attract the native Web3 industry but are also a strategic breakthrough eagerly anticipated by traditional game entrepreneurs. On January 5, ICC Camp

BellaVeneers Snap-on Veneers Give You a Perfect Smile Without Grinding Teeth!

A radiant, confident smile crafts unforgettable first impressions, opens doors to opportunities, and fosters invaluable connections. Much like a sculpted physique speaks to discipline and

© 1997-2023 News-Wire.com